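As larger multimodal models and on-device generative and agentic AI drive AI workloads upward, edge systems need more than additional compute. They need dedicated accelerators that deliver real-time performance, low power consumption, strong data privacy, and scalability. The Ara240 discrete neural processing unit (DNPU) is built to meet these edge AI demands.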

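Ara240, NXP's first discrete neural processing unit (DNPU), combines an AI-optimized architecture delivering up to 40 eTOPS (equivalent tera operations per second) with large on-chip memory and high off-chip bandwidth. It is purpose-built to run advanced AI, LLMs, vision-language models (VLMs), multimodal language models, and next-generation edge inference.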

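Whether you are designing industrial automation systems, autonomous robots, smart infrastructure, advanced human-machine interface (HMI) platforms, or edge servers, the Ara240 DNPU provides the performance headroom needed to run modern AI workloads directly at the edge.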

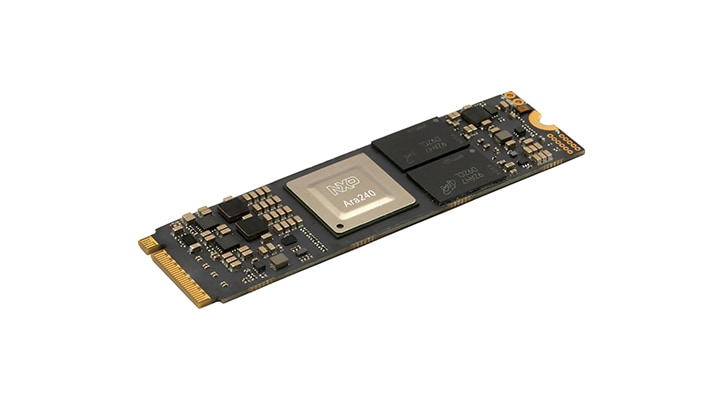

Figure 1. The Ara240 DNPU enables real-time on-device inference for advanced AI applications.

How the Ara240 DNPU Is Purpose-Built for Advanced AI at the Edge

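The Ara240 DNPU is purpose-built for demanding on-demand AI applications where latency, privacy, and power efficiency matter. It supports the most widely used AI model architectures, including convolutional neural networks (CNNs), transformers, LLMs, VLMs, and multimodal models, so developers can deploy high-performance generative AI in embedded and edge systems without relying on cloud computing.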

The key technical features of the DNPU are:

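- Up to 40 eTOPS (equivalent TOPS): high throughput for running complex, parallel AI workloads offloaded from the host processor

- Large on-chip memory plus a dedicated LPDDR4 interface (up to 16 GB): supports larger models and higher-bandwidth processing without adding contention for host memory

- PCIe Gen4 x4 and USB 3.2 Gen1 host interfaces: flexible, high-speed integration

- Secure boot and a hardware root of trust: secure AI pipelines and protected deployment

- Linux and Windows runtime support: broad compatibility across edge systems

- Framework support: TensorFlow, PyTorch, ONNX, and others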
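The 16 GB LPDDR4 figure above invites a quick sizing check of which models could plausibly be held in device memory. The following is a minimal sketch, not NXP tooling: the parameter counts, the bit widths, the 20% overhead allowance, and the function names are all illustrative assumptions, and the memory actually usable on the device is not stated in this article.

```python
"""Back-of-envelope check: do a model's weights fit in 16 GB of LPDDR4?

All names and numbers here are illustrative assumptions, not NXP APIs or specs.
"""

GIB = 2**30  # bytes per GiB; the 16 GB capacity is treated as 16 GiB (assumption)


def weight_footprint_gib(num_params: float, bits_per_weight: int) -> float:
    """Memory needed for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def fits_in_memory(
    num_params: float,
    bits_per_weight: int,
    memory_gib: float = 16.0,
    overhead_fraction: float = 0.2,
) -> bool:
    """Return True if weights plus a rough overhead allowance fit in memory.

    overhead_fraction is a hypothetical allowance for activations, caches,
    and runtime buffers; a real deployment needs measured numbers.
    """
    needed = weight_footprint_gib(num_params, bits_per_weight) * (1 + overhead_fraction)
    return needed <= memory_gib


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only to show the trend.
    for label, params in [("1B", 1e9), ("7B", 7e9), ("13B", 13e9)]:
        for bits in (16, 8, 4):
            size = weight_footprint_gib(params, bits)
            verdict = "fits" if fits_in_memory(params, bits) else "does not fit"
            print(f"{label} params @ {bits}-bit: {size:5.1f} GiB of weights, {verdict}")
```

The estimate counts weights only and scales them by a fixed factor, so it is best read as a first filter on model size and quantization level before measuring on hardware.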

Designed as a scalable AI companion processor, the Ara240 DNPU brings powerful AI acceleration to systems that need to run complex inference locally, reducing latency, lowering cloud costs, and strengthening data privacy.

Accelerate Prototyping with the Ara240 16 GB M.2 Module

To help developers evaluate the Ara240 DNPU quickly, NXP offers the Ara240 16 GB M.2 module, designed to integrate seamlessly into any host platform with an M-Key PCIe (Peripheral Component Interconnect Express) interface.

Module features include:

- Up to 40 eTOPS of AI performance

- Proprietary neural network processor running at up to 900 MHz

- 16 GB of LPDDR4 (Low-Power Double Data Rate 4) memory

- M.2 2280 M-Key form factor

- PCIe Gen4 x1/x2/x4 configurations

- Currently supported with i.MX 8M Plus and i.MX 95 applications processors
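The x1/x2/x4 link options above trade host-interface bandwidth against available lanes. As a rough illustration, the sketch below computes the theoretical per-direction PCIe Gen4 bandwidth for each width from the standard 16 GT/s lane rate and 128b/130b encoding. It ignores packet and protocol overhead, so real throughput will be lower, and the function names and the 4 GB payload are hypothetical.

```python
"""Theoretical PCIe Gen4 link bandwidth for the x1/x2/x4 widths listed above.

Upper bounds only: packet and protocol overhead are ignored.
"""

PCIE_GEN4_GT_PER_S = 16.0        # raw transfer rate per lane, per direction
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def link_bandwidth_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s (decimal) for a Gen4 link."""
    if lanes not in (1, 2, 4):
        raise ValueError("expected a lane count of 1, 2, or 4")
    # GT/s -> usable Gbit/s after encoding -> GB/s, scaled by lane count
    return PCIE_GEN4_GT_PER_S * ENCODING_EFFICIENCY / 8 * lanes


def transfer_time_s(payload_gb: float, lanes: int) -> float:
    """Best-case time to move payload_gb gigabytes across the link."""
    return payload_gb / link_bandwidth_gb_per_s(lanes)


if __name__ == "__main__":
    payload_gb = 4.0  # hypothetical model image size, for illustration only
    for lanes in (1, 2, 4):
        bw = link_bandwidth_gb_per_s(lanes)
        t = transfer_time_s(payload_gb, lanes)
        print(f"x{lanes}: {bw:.2f} GB/s peak, {payload_gb:.0f} GB in at least {t:.2f} s")
```

Because weights stay in the module's own LPDDR4 once loaded, link width mostly affects model load time and input/output streaming rather than steady-state inference, which is one reason a narrower link can still be workable on hosts with few free lanes.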

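The module offers an efficient path to evaluating Ara240 performance, accelerating proof-of-concept development, and integrating high-performance AI into existing designs. The Ara240 16 GB M.2 module will be available from nxp.com and distributors in June 2026.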

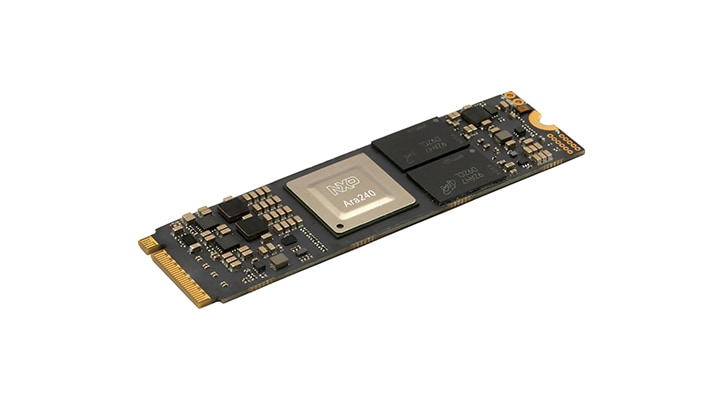

Figure 2. The Ara240 16 GB M.2 module lets developers prototype quickly.

A Great Combination: Ara + i.MX

The Ara240 M.2 module can serve as an AI coprocessor for i.MX applications processors.

This adaptability lets developers who already use NXP MPUs significantly boost AI performance simply by adding the Ara240 DNPU as a companion accelerator.

A Partner Ecosystem Offering Compact, Scalable Ara240 Accelerator Modules

In addition to the NXP Ara240 M.2 module, NXP's ecosystem partners are releasing their own Ara240-based modules.

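These modules make it easy to evaluate the Ara240 DNPU across a range of thermal, mechanical, and performance configurations, supporting applications such as industrial PCs, robotics systems, and compact embedded edge devices. These boards provide a smooth development path from early evaluation through full system design.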

Purpose-Built Software for Bringing AI to the Physical World

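NXP's eIQ® Agentic AI Framework extends the eIQ AI software development environment with capabilities designed specifically to take advantage of dedicated NPU acceleration at the edge. The framework coordinates multiple models, such as vision, language, and control, and efficiently maps inference and decision-making onto hardware accelerators rather than general-purpose CPUs, so agentic AI workloads can run deterministically and in real time.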

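By combining hardware-aware model preparation, optimized orchestration, and secure on-device execution, NXP's eIQ Agentic AI Framework enables the DNPU to sustain low latency and predictable performance for autonomous generative AI workloads. This simplifies deployment of complex multimodal edge AI systems while reducing reliance on the cloud.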

With the Ara240 DNPU and a growing ecosystem of M.2 modules, developers gain:

- Scalable AI performance

- Real-time inference capability

- Stronger privacy through local processing

- Lower operating and cloud costs

- Flexibility to support evolving model architectures

Accelerate real-time, on-device AI at the edge with Ara240, NXP's first DNPU, which delivers up to 40 eTOPS with scalable memory and bandwidth. Learn more about the Ara240 DNPU.